This post centres on openness: what happens in 10 years when I want to access my stuff on my proprietary computer?

Let’s open it up with a quote that sums up where I stand. Concluding his follow-up article on Apple’s seeming non-openness of its x86 Darwin OS, on which Mac OS X is built, Tom Yager said:

The Mac platform is an overflowing basket of raw materials for innovators and creators of all stripes. It’s what Steve Jobs would fantasize about if he still worked out of his garage, and you can bet that he’d be livid to find that the vendor locked some portion of his chosen platform behind a gate without a word of notice or explanation.

Here’s the thing. I don’t think those would be good odds. “I could bet” SJ wants an open Apple? It’s the one question I’d love to have answered, but one I’m certain I’ll never know the answer to. There’s no indication either way whether Apple, and by personification Steve Jobs, cares that much about locking up my data behind a gate, even if that gate doesn’t slam shut for another 10 years.

Mark Pilgrim started it all when he decided to switch away from Apple to Linux. John Gruber then wrote exhaustingly on the topic. Both make good points. Mark feels too locked in by Mac OS X (and Apple’s associated software), with the horrible thought that at some stage, his work or his life will be unable to be retrieved due to some poorly thought out software decision. The short of it: Apple only cares that software works now, not that what you produce with it will be useful in the far future.

John agrees that it’s a good point, going off for a while (justifiably, given his audience) on a big tangent about why it’s a valid point to make. Data openness overrides any aesthetic considerations of Apple’s hardware or software. (And even then we all know Apple’s software can be pretty hideous at times.) But John isn’t sure that Mark’s reaction is entirely the right decision:

…if Apple’s lack of openness were a disaster in the making, it (the disaster) would have occurred already.

But I disagree with this point. Only in the last half-dozen years have digital input and storage increased to such an extent that it’s not unreasonable to consider storing hundreds of thousands of photos on a computer in easily browsable form, let alone self-edited home movies. The ease with which this is now possible facilitates a much greater creative output than when we stored video on VHS and photos in shoeboxes.

People concerned with keeping their digitally written documents in a long-term storable form forsook Microsoft Word years ago, flocking to more stable formats such as SGML and LaTeX.

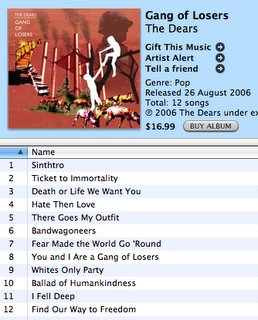

A larger (in my eyes) can of worms opens up when you consider digital music. This isn’t stuff you create yourself any more; now we’re talking about cash being transferred for media that you seemingly own. I’m scared to buy music from iTunes, for two reasons. The first: it’s so god-damn easy that it’s addictive. But that’s a trivial reason. The second is more serious: what happens if all that lovely copy-protected music is rendered unreadable for one of a variety of reasons?

(The same argument holds for DVDs, one of the reasons I’ve stopped buying them. What the hell do I do with a whole collection of movies that will only play in Australian DVD players if I move to another country?)

And I’m talking about legal options here. If iTunes DRM is easy to crack now, then adjust my argument for something more hole-proof, such as Windows Media.

These issues literally make me scared of using computers, because it’s conceivable (to me) that I might wind up in a spiral of doom in which I end up with the choice of either abandoning my stuff or using the exact same system (same hardware, same software) in perpetuity. This latter case clearly isn’t tenable.

So, what to do? The solution for music is to buy MP3s from emusic.com, or buy unprotected CDs from which to rip. This situation might not last forever, but I hope it does. Larger problems loom for movies.

The solution for software isn’t so clear. Software companies, in the ideal case, would acknowledge that they’re not omnipotent and won’t be around forever; the formats they use to store their information should be totally transparent. In the short term, that requires careful choice of software to use: I guess, then, that it’d be advisable to ditch iLife, et al., until Apple as a company starts supporting these issues. But then you lose iTunes, and that means trouble getting an iPod to work…so for now I just suck it up.

In the end, you’ve just got to have enough faith that someone, somewhere, sometime will code up an exporter for any data that’s been irrevocably locked away in some terrible future.

...but so messy. I don't think I'm ever going to clean my shirt. Here're my lovely chocolate compatriots:

And Lauren's success with big biting. I'm still munching on that block, days and days later. Yum.

Happy holidays; mine so far have sure been great.
